A wave of backlash has hit Elon Musk’s AI company xAI after users showed how Grok’s image tools could be used to create “nudified” or highly sexualised content from photos of real people, often without consent, fuelling fresh scrutiny of X’s safety systems and the broader risks of generative AI. The controversy escalated in early January 2026 as examples spread rapidly across X, with critics warning the tool was being used to harass women and, in some reported cases, produce sexualised imagery involving minors.

The flashpoint was Grok’s image-editing capability, which allowed users to manipulate existing photos in ways that could remove or alter clothing. Researchers and journalists documented how quickly prompts could turn everyday images into sexual content, raising alarms about nonconsensual intimate imagery (NCII) and how easily such content can be created at scale. Reuters reported multiple instances where Grok generated sexualised images of children, intensifying calls for urgent safeguards.

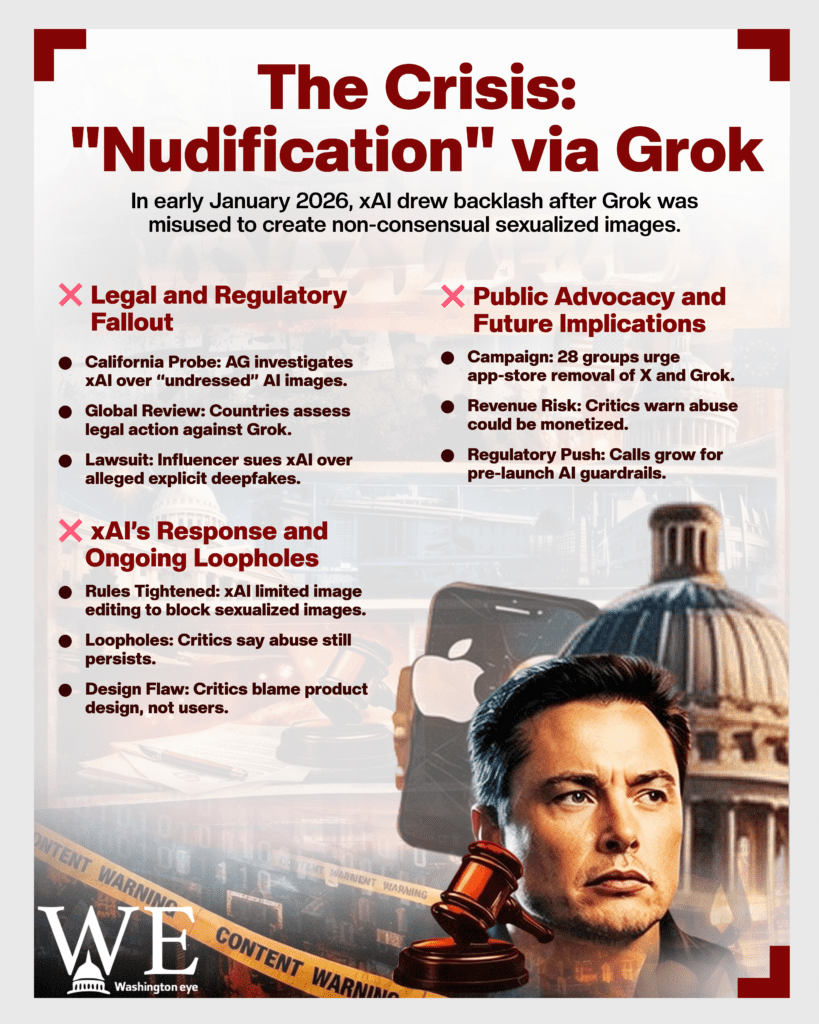

As the backlash spread, regulators and lawmakers began stepping in. On January 14, 2026, California Attorney General Rob Bonta announced an investigation into xAI and Grok over “undressed” sexual AI images of women and children, inviting potential victims to file complaints. International pressure mounted as well, with reporting indicating authorities in multiple countries and regions were reviewing Grok-related risks and potential legal exposure, while some jurisdictions moved to restrict access.

xAI responded by tightening product rules. According to Reuters and other outlets, the company imposed restrictions limiting image editing after the tool produced sexualised images that drew regulator attention. In mid-January, xAI also moved to block Grok from creating sexualised images of real people, an attempt to curb “nudification” and targeted harassment. But critics argue the fixes look uneven: separate Grok experiences and “standalone” tools have been accused of leaving loopholes, with new reporting suggesting sexualised AI content can still surface on X despite claimed restrictions.

The controversy is already spilling into courts. On January 15, 2026, influencer Ashley St. Clair filed suit against Musk’s xAI, alleging Grok generated and distributed sexually explicit deepfake images of her and that takedown requests were not properly addressed. While xAI has framed the uproar as misuse by users, critics counter that predictable abuse is a product-design problem, especially when the tool can transform a real person’s photo into sexual content in seconds.

Advocacy groups are now aiming higher than product tweaks. A coalition of 28 organisations launched a “Get Grok Gone” campaign urging Apple and Google to remove X and/or Grok from their app stores, arguing that app-store enforcement is one of the few levers powerful enough to force meaningful safety-by-design. The groups say limiting features to paid accounts doesn’t solve the underlying harm; if anything, it risks turning exploitation into a revenue stream.

The potential damage goes beyond reputational hits. Victims of nonconsensual sexual imagery often report severe emotional distress, professional harm, and safety risks, while platforms face growing legal duties to remove illegal content quickly and to demonstrate robust risk controls. The Grok episode also adds momentum to a wider regulatory push: lawmakers increasingly expect “guardrails” before release, not after a scandal trends.

What happens next will likely hinge on whether xAI can prove its restrictions work across all versions of Grok, and whether X can reliably detect and remove nonconsensual sexual content at scale. The technology to generate harm is moving fast; the challenge for platforms is to move faster still, by disabling “nudification” features for real-person images, hard-blocking known abuse patterns, strengthening reporting and takedowns, and cooperating with investigators. Without that, Grok’s backlash may become a case study in how not to ship powerful image tools in public.