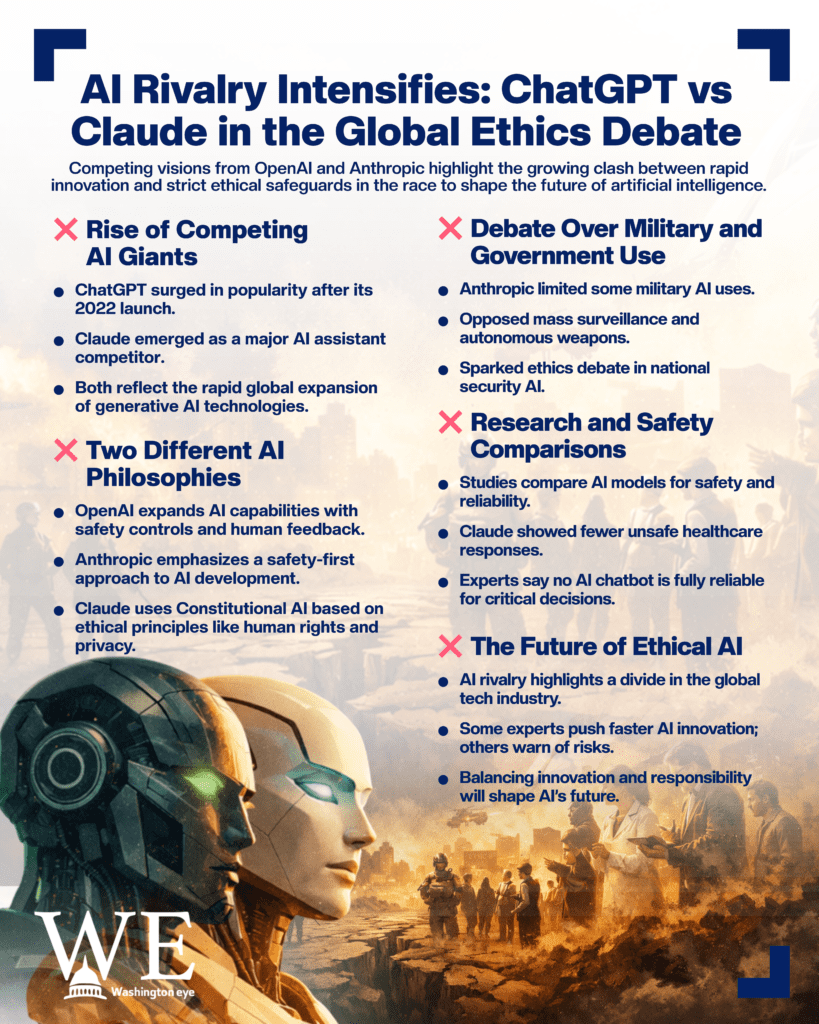

The global race to dominate artificial intelligence (AI) is increasingly being framed as a battle not only of technology but also of ethics. Two of the most prominent AI systems, ChatGPT and Claude, have emerged at the center of this debate. Developed by different companies with contrasting philosophies, the two systems have sparked discussions among governments, technology experts, and millions of users worldwide about the future of ethical AI.

ChatGPT, developed by the U.S.-based company OpenAI and led by CEO Sam Altman, was launched publicly in late 2022 and rapidly became one of the most widely used AI chatbots in history. The tool can generate text, analyze data, and assist users in fields ranging from education and journalism to coding and marketing. Meanwhile, Claude, an AI assistant developed by the research company Anthropic, entered the market as a strong competitor, emphasizing safety and ethical guardrails in its design. Both tools are part of a broader wave of generative AI technologies transforming industries globally.

The debate between the two AI systems intensified in 2025 and 2026 as governments and corporations began adopting AI tools for large-scale operations. At the heart of the rivalry lies a key question: how should artificial intelligence be developed and used responsibly? Anthropic has positioned Claude as an “ethical alternative” to competing AI systems, highlighting safety and transparency as central priorities. The company developed a method called “Constitutional AI,” which trains the model using a set of guiding principles such as respect for human rights and privacy. This approach encourages the AI to refuse harmful or unethical requests.

OpenAI, on the other hand, has focused on expanding AI capabilities while maintaining safeguards through human feedback and moderation systems. ChatGPT is known for its versatility, creative writing ability, and integration with other digital tools. Experts say OpenAI’s strategy emphasizes rapid innovation combined with safety measures, while Anthropic tends to prioritize risk reduction before expanding its systems.

The rivalry escalated recently when political and military decisions in the United States brought the issue of ethical AI to the forefront. In early 2026, tensions emerged after Anthropic reportedly refused to allow its AI technology to be used for certain military purposes such as mass surveillance or autonomous weapons. This stance created friction with government officials and defense agencies seeking broader AI capabilities for national security applications.

Following the dispute, several U.S. federal agencies decided to discontinue the use of Anthropic’s AI products and shift to competing platforms, including OpenAI’s systems. The move triggered a broader debate across the technology sector about whether ethical restrictions in AI development help protect society or limit innovation.

Despite the political tensions, the controversy also boosted public interest in Claude. Reports indicated that downloads of the chatbot surged globally after the dispute became public. Some celebrities and technology users publicly switched to the platform, praising its ethical stance and transparency.

Researchers and academics have also been studying the ethical behavior of large language models. Studies show that Claude and ChatGPT perform differently in areas such as bias, medical advice, and decision-making scenarios. In one evaluation involving healthcare questions, Claude produced fewer unsafe responses than several other AI systems, although researchers emphasize that all chatbots still require improvement before they can be trusted with critical decisions.

The rivalry between the two AI platforms reflects a broader ideological divide within the technology industry. Some developers advocate accelerating AI innovation to solve global challenges such as disease, poverty, and climate change. Others warn that advanced AI could pose risks ranging from misinformation and economic disruption to potential misuse by authoritarian governments or military systems.

For everyday users, however, the competition has largely resulted in rapid improvements in AI capabilities. Millions of people now rely on tools like ChatGPT and Claude for writing assistance, research support, and daily productivity. Companies are racing to release more advanced models with better reasoning abilities, longer context windows, and improved safety systems.

As the technology evolves, experts say the key challenge will be balancing innovation with responsibility. Whether ChatGPT’s approach of rapid development or Claude’s philosophy of strict ethical safeguards ultimately shapes the future of AI remains uncertain. What is clear, however, is that the battle for ethical AI is no longer just a technical debate; it is a global conversation about how humanity will control and coexist with intelligent machines.